Why PrivateGPT?

PrivateGPT provides an API (a tool for computer programs) that has everything you need to create AI applications that understand context and keep things private.

It’s like a set of building blocks for AI. This API is designed to work just like the OpenAI API, but it has some extra features.

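So, if you’re already using the OpenAI API in your software, you can switch to the PrivateGPT API with little or no change to your code (typically just the address your client points to), and since the model runs on your own machine, there are no per-request API fees.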

You can run PrivateGPT on CPU only, so there is no need to buy an expensive GPU if you don’t want one.

How to use PrivateGPT?

The PrivateGPT documentation is great and guides you through setting up all the dependencies.

You just need Python installed on your computer, and then you install the required packages.

Projects like this usually depend on a very specific set of packages (pinned versions and so on), which is why using a Python package and environment manager is recommended.

I have tried those tools with other projects and they worked for me about 90% of the time; the other 10% was probably me doing something wrong.

To make sure the steps are reproducible for anyone, I bring you a guide to PrivateGPT with Docker, which packages all the dependencies inside the container so the setup behaves the same on every machine.

- You will be able to use the Docker image with PrivateGPT I created - Quick Setup

- You can also learn how to build your own Docker image to run PrivateGPT locally - Recommended

PrivateGPT with Docker

With this approach, you need just one thing: Docker installed.

Then, use the following Stack to deploy it:

Using PrivateGPT with Docker 🐳 - PreBuilt Image

Before moving on - Remember to always check the source of the Docker Images you run. Consider building your own.

    version: '3'
    services:
      ai-privategpt:
        image: fossengineer/privategpt # Replace with your image name and tag
        container_name: privategpt
        ports:
          - "8001:8001"
        volumes:
          - ai-privategpt:/app
        command: /bin/bash -c "poetry run python scripts/setup && tail -f /dev/null" #make run

    volumes:
      ai-privategpt:

That’s it, now get your favourite LLM model ready and start using it with the UI at: localhost:8001

Remember that you can use CPU-only mode if you don’t have a GPU (that is my case as well).

Just remember to use models compatible with llama.cpp, as the project suggests.

PrivateGPT API

The PrivateGPT API is compatible with the OpenAI API (the one behind ChatGPT). This means you can use it with other projects that require such an API to work.
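As a rough illustration of what that compatibility means in practice, here is a minimal sketch that builds an OpenAI-style chat request using only the Python standard library. The `/v1/chat/completions` path follows the OpenAI convention, and the `use_context` field is assumed to be a PrivateGPT extension; check both against the Swagger UI of your own instance. The helper names `build_chat_request` and `ask` are made up for this example.

```python
import json
import urllib.request

# Assumption: PrivateGPT is published on host port 8001, as in the compose file above.
BASE_URL = "http://localhost:8001"


def build_chat_request(prompt, base_url=BASE_URL, use_context=False):
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "messages": [{"role": "user", "content": prompt}],
        # Assumption: "use_context" asks PrivateGPT to answer from ingested
        # documents; remove it when talking to other OpenAI-style servers.
        "use_context": use_context,
        "stream": False,
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt, **kwargs):
    """Send the request and return the first reply (needs a running server)."""
    with urllib.request.urlopen(build_chat_request(prompt, **kwargs)) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

With the container running, `ask("Summarise my documents", use_context=True)` would send the request. Building the request separately from sending it makes it easy to inspect the payload before anything goes over the wire.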

How to Build your PrivateGPT Docker Image

The best (and most secure) way to self-host PrivateGPT is to build your own image.

You will need the Dockerfile.

Private GPT to Docker with This Dockerfile

    # Use the specified Python base image
    FROM python:3.11-slim

    # Set the working directory in the container
    WORKDIR /app

    # Install necessary packages
    RUN apt-get update && apt-get install -y \
        git \
        build-essential

    # Clone the PrivateGPT repository
    RUN git clone https://github.com/imartinez/privateGPT

    WORKDIR /app/privateGPT

    # Install poetry
    RUN pip install poetry

    # Copy the project files into the container
    COPY . /app

    # Add openai pre-v1 to avoid an error
    RUN sed -i '/\[tool\.poetry\.dependencies\]/a openai="0.28.1"' pyproject.toml

    # Lock and install dependencies using poetry
    RUN poetry lock
    RUN poetry install --with ui,local

    # Run setup script
    #RUN poetry run python scripts/setup # this script downloads the models (the embedding and LLM models)

    # Keep the container running
    #CMD ["tail", "-f", "/dev/null"]

The setup script will download these 2 models by default:

- LLM: conversational model

- Embedding: the model that converts our documents to a vector DB

If you swap in other models, pick ones in GGUF format (the format llama.cpp uses) and it will be fine.
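If you are unsure whether a downloaded model file really is GGUF, a quick check of the file header can help. The sketch below relies on the fact that GGUF files start with the four ASCII bytes `GGUF` followed by a 32-bit version number; it assumes the common little-endian layout, and the function name `read_gguf_version` is just an illustrative choice.

```python
import struct

GGUF_MAGIC = b"GGUF"  # first four bytes of a GGUF file


def read_gguf_version(path):
    """Return the GGUF format version, or None if the file is not GGUF."""
    with open(path, "rb") as fh:
        header = fh.read(8)
    if len(header) < 8 or header[:4] != GGUF_MAGIC:
        return None
    # Assumption: little-endian file, so the magic is followed by a
    # little-endian unsigned 32-bit version number.
    (version,) = struct.unpack("<I", header[4:8])
    return version
```

A return value of `None` suggests the file uses an older or different format and is unlikely to load.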

And then build your Docker image to run PrivateGPT with:

docker build -t privategpt .

#docker tag privategpt docker.io/fossengineer/privategpt:v1 #example I used

#docker push docker.io/fossengineer/privategpt:v1

docker-compose up -d #to spin the container up with CLI

Using your PrivateGPT Docker Image

You will need Docker installed and use the Docker-Compose Stack below.

If you are not very familiar with Docker, don’t be scared and install Portainer to deploy the container with GUI.

Then, you just need this Docker-Compose to deploy PrivateGPT

    version: '3'
    services:
      ai-privategpt:
        image: privategpt # Replace with your image name and tag
        container_name: privategpt2
        ports:
          - "8002:8001"
        volumes:
          - ai-privategpt:/app
        # environment:
        #   - SOME_ENV_VAR=value # Set any environment variables if needed
        #command: tail -f /dev/null
        # environment:
        #   - PGPT_PROFILES=local
        command: /bin/bash -c "poetry run python scripts/setup && tail -f /dev/null" #make run

    volumes:
      ai-privategpt:

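Execute the command make run in the container, using the container_name from your compose file (privategpt for the prebuilt stack, privategpt2 for the stack above). Once the server has started, it prints the log line "Application startup complete."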

docker exec -it privategpt make run

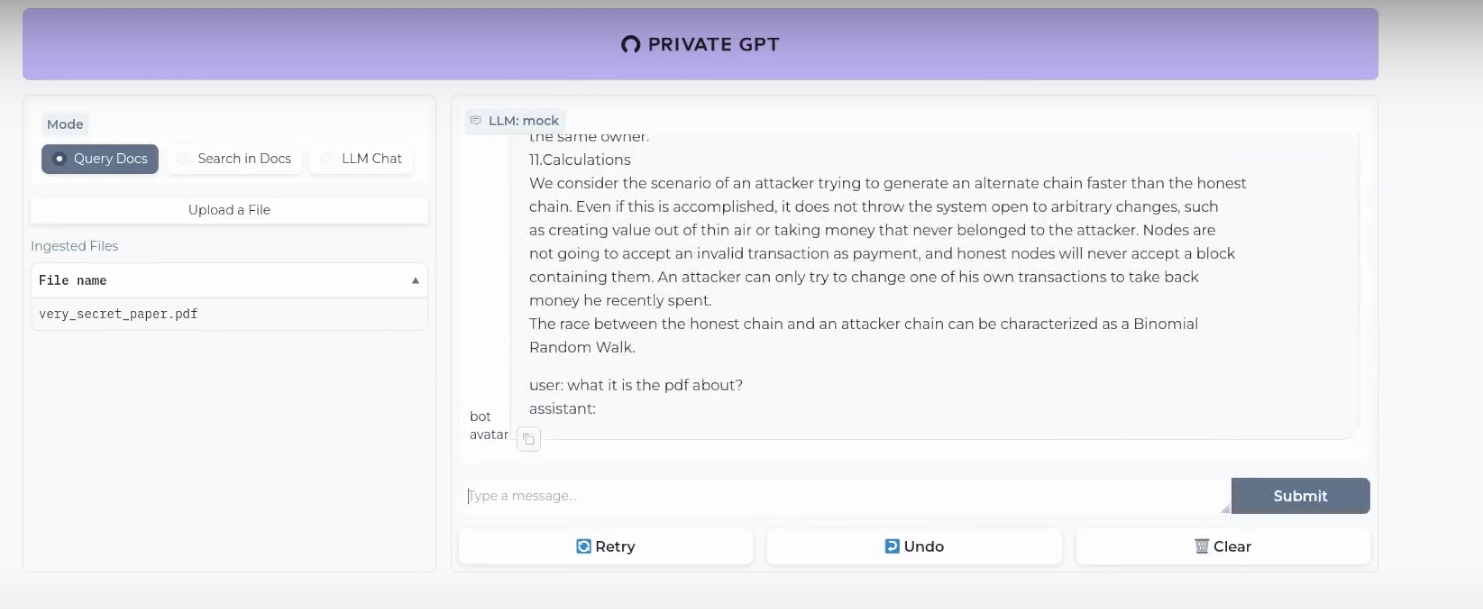

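Navigate to http://localhost:8001 to use the Gradio UI, or to http://localhost:8001/docs (API section) to try the API using Swagger UI. If you deployed the stack above, which maps host port 8002 to the container, use port 8002 instead.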
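The first start can take a while because the models have to be downloaded, so it can be handy to poll the URL until it answers instead of refreshing the browser. This is a minimal sketch with the standard library only; `is_up` and `wait_until_up` are hypothetical helper names, and the URL in the usage note assumes the port mapping from the compose files in this post.

```python
import time
import urllib.error
import urllib.request


def is_up(url, opener=urllib.request.urlopen, timeout=5):
    """Return True if `url` answers with an HTTP status below 400."""
    try:
        with opener(url, timeout=timeout) as resp:
            return resp.status < 400
    except (urllib.error.URLError, OSError):
        # Connection refused, timeout, or an HTTP error status.
        return False


def wait_until_up(url, attempts=30, delay=2.0, opener=urllib.request.urlopen):
    """Poll `url` until it responds or the attempts run out."""
    for _ in range(attempts):
        if is_up(url, opener=opener):
            return True
        time.sleep(delay)
    return False
```

For example, `wait_until_up("http://localhost:8001/docs")` returns `True` as soon as the Swagger page is reachable. The `opener` parameter exists only so the function can be exercised without a real server.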

FAQ

A Detailed Video - How to use PrivateGPT with Docker

What is happening inside PrivateGPT?

These are the guts of our PrivateGPT beast.

The Embedding Model will create the vectorDB records of our documents and then, the LLM will provide the replies for us.

- Embedding Model - BAAI/bge-small-en-v1.5

- Conversational Model (LLM) - TheBloke/Mistral 7B

- VectorDBs - PrivateGPT uses Qdrant (F/OSS ✅)

- RAG Framework - PrivateGPT uses LlamaIndex (yep, also F/OSS ✅)

You can check and tweak these default options in the settings.yaml file.
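To make the division of labour between the embedding model, the vector DB and the LLM more concrete, here is a toy sketch of the retrieval step. It is not how PrivateGPT implements it: a word-count vector stands in for the real embedding model, a Python list stands in for Qdrant, and the prompt is only built, never sent to an LLM. All names (`embed`, `ToyVectorStore`, `build_prompt`) are invented for the example.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy 'embedding': a bag-of-words count vector (stand-in for a real model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class ToyVectorStore:
    """In-memory stand-in for a vector DB: stores (vector, text) records."""

    def __init__(self):
        self.records = []

    def add(self, text):
        self.records.append((embed(text), text))

    def search(self, query, k=1):
        q = embed(query)
        ranked = sorted(self.records, key=lambda r: cosine(q, r[0]), reverse=True)
        return [text for _, text in ranked[:k]]


def build_prompt(question, store, k=1):
    """Combine the best-matching documents with the question for the LLM."""
    context = "\n".join(store.search(question, k=k))
    return f"Context:\n{context}\n\nQuestion: {question}\nAnswer:"
```

In the real pipeline the resulting prompt would be passed to the conversational model, which is the part that writes the reply.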

Python for PrivateGPT

What are Gradio Apps?

Gradio is an open-source Python library that simplifies the development of interactive machine learning (ML) and natural language processing (NLP) applications.

Gradio allows developers and data scientists to quickly create user interfaces for their ML models, enabling users to interact with models via web-based interfaces without the need for extensive front-end development.
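For a feel of how little code a Gradio interface needs, here is a minimal sketch, unrelated to PrivateGPT's own UI code. It assumes Gradio is installed (`pip install gradio`); `echo_reply` is a placeholder where a model call would go, and `build_demo` is a hypothetical helper name.

```python
def echo_reply(message):
    """Placeholder for a model call; it only echoes the input back."""
    return f"You said: {message}"


def build_demo():
    """Wrap echo_reply in a simple text-in, text-out web UI."""
    import gradio as gr  # imported lazily so echo_reply works without Gradio

    return gr.Interface(fn=echo_reply, inputs="text", outputs="text")


# To serve the UI locally, run: build_demo().launch()
```

Swapping `echo_reply` for a function that queries a model is all it takes to put a web front end on it.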

Python Dependencies 101

When we are sharing software, we need to make sure that our PCs have the same libraries installed.

For Python in particular (AI/ML leans heavily on this language), these should sound familiar:

- One option we already saw. It might be overkill, but it always works: I’m talking about using Docker containers with Python. Containers and self-hosting play well together 🤘.

- Other ways: when looking at new AI projects, don’t be scared if you find any of these, as they are common ways to install dependencies in Python.

- Conda provides a cross-platform and language-agnostic (not only Python, but also R, Julia, C/C++…) solution for managing software environments and dependencies, making it especially valuable for complex projects involving multiple programming languages and libraries.

- Poetry is a tool for dependency management and packaging in Python. It allows you to declare the libraries your project depends on and it will manage (install/update) them for you.

- Python’s built-in venv (virtual environment) module is a powerful tool for creating isolated Python environments specifically for Python projects.

- Pipenv is a command-line tool that combines pip and virtualenv: it creates a virtual environment for your project and tracks its dependencies in a Pipfile.
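Of the options above, venv is the only one that ships with Python itself, so it is the easiest to try. The usual route is `python -m venv .venv` on the command line; the sketch below does the same from Python through the standard `venv` module. The helper name `create_env` and the `.venv` folder name are illustrative choices, not something the project requires.

```python
import venv
from pathlib import Path


def create_env(target_dir, with_pip=False):
    """Create an isolated environment and return the path to its config file."""
    # with_pip=True would also bootstrap pip into the new environment.
    venv.create(target_dir, with_pip=with_pip)
    return Path(target_dir) / "pyvenv.cfg"
```

Calling `create_env(".venv", with_pip=True)` in a project folder gives you an environment you can activate and install pinned packages into without touching the system Python.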