Dify AI is an open-source, large language model app development platform that enables users to create a wide range of AI agents that can automate workflows.

Its recent update has revamped over 100,000 lines of code, incorporating a drag-and-drop UI that simplifies the development of large language model apps.

Users can visually debug nodes, import and export workflows, and integrate with other tools and plugins.

With Dify AI, you can create chatbots, workflows, and even complete applications with little or no code.

The Dify AI Project

- The Dify AI project is fully open source; you can browse the code at https://github.com/langgenius/dify.

With Dify AI, you get no-code AI workflows: drag, drop, deploy.

Why Dify AI?

Dify AI provides a powerful platform for anyone looking to leverage AI capabilities in application development without the typical barriers associated with coding.

- Ease of Use: Create complex applications without needing extensive coding knowledge.

- Efficiency: Develop AI-powered applications quickly and efficiently.

- Seamless Integration: Easily integrate with a range of other tools and plugins.

- Focus on Logic: Concentrate on the application’s logic rather than the intricacies of coding.

Self-Hosting Dify AI

Prerequisites: Just Get Docker 🐋👇

You can install Docker on any PC, Mac, or Linux machine at home, or on any cloud provider you like.

It only takes a few moments. On Debian or Ubuntu, run:

```sh
sudo apt-get update && sudo apt-get upgrade -y
curl -fsSL https://get.docker.com -o get-docker.sh
sudo sh get-docker.sh
```

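The script also installs the Docker Compose plugin, which provides the `docker compose` command used throughout this guide. The legacy standalone `docker-compose` (installed with `sudo apt install docker-compose -y`) is not required.

Once the process finishes, you can use Docker to self-host other services as well.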

Check the installed versions, and that the Docker daemon is running, with:

```sh
docker --version
docker compose version
sudo systemctl status docker
```

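Then clone the Dify repository, create the `.env` file from the bundled example, and start the stack:

```sh
git clone https://github.com/langgenius/dify.git
cd dify/docker
cp .env.example .env
docker compose up -d
```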

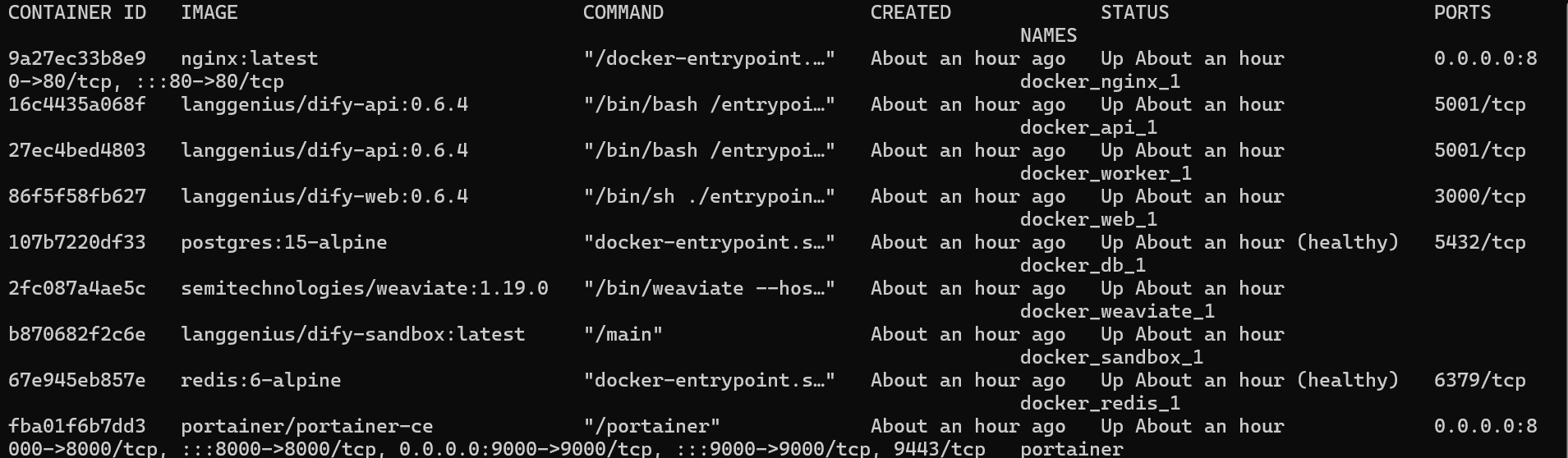

Check that all the containers are up with:

```sh
docker compose ps
```

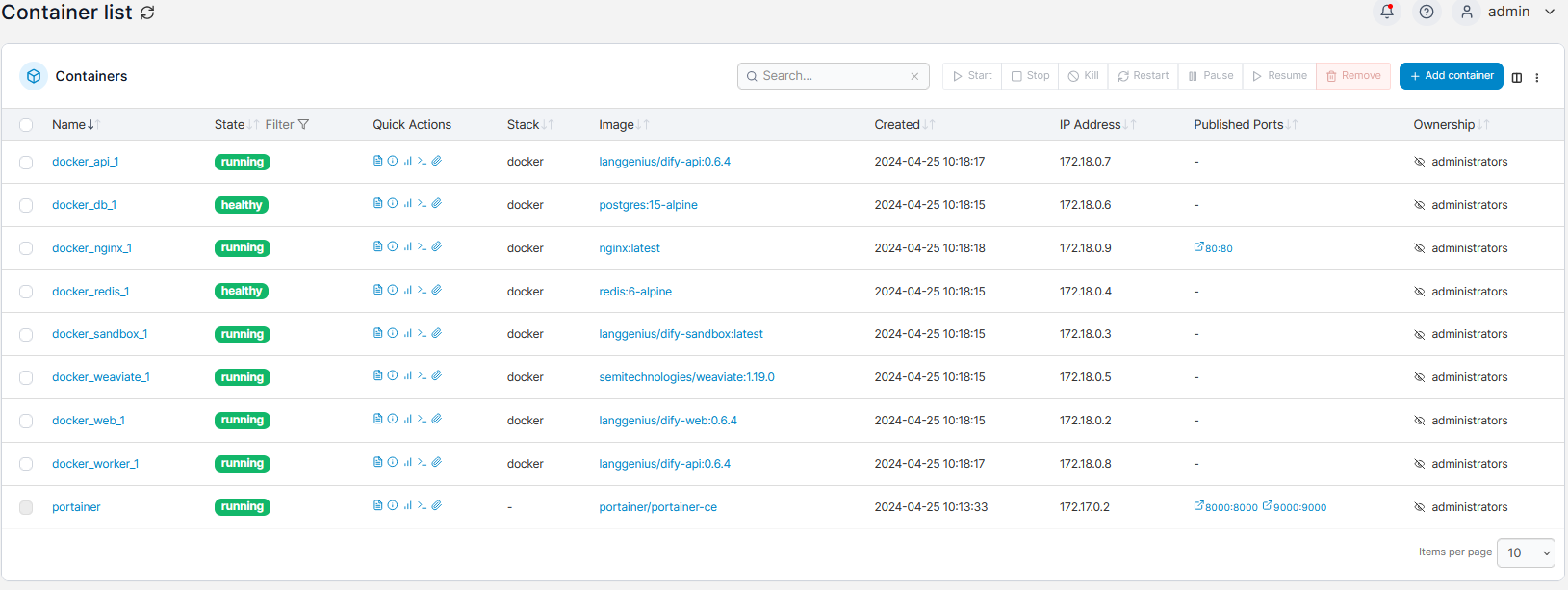

If you prefer a visual interface, Portainer also shows whether the Dify AI containers are running.

Now open http://localhost/install in your browser to set up the admin account.
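If you script the setup, a small readiness check saves refreshing the browser while the containers finish starting. The sketch below is a stdlib-only Python poller; the URL `http://localhost/install`, the 5-second interval, and the 5-minute timeout are assumptions for a default local install, and `wait_until_ready` is a hypothetical helper, not part of Dify.

```python
import time
import urllib.request
from typing import Callable


def http_ok(url: str) -> bool:
    """Return True if a GET on `url` answers with a 2xx status."""
    try:
        with urllib.request.urlopen(url, timeout=5) as resp:
            return 200 <= resp.status < 300
    except OSError:
        # URLError/HTTPError subclass OSError: connection refused,
        # a 502 from the proxy while the API is still booting, etc.
        return False


def wait_until_ready(
    url: str,
    timeout_s: float = 300.0,
    interval_s: float = 5.0,
    check: Callable[[str], bool] = http_ok,
    sleep: Callable[[float], None] = time.sleep,
) -> int:
    """Poll `url` until `check` succeeds; return the number of attempts used.

    Raises TimeoutError once roughly `timeout_s` seconds of sleeping
    have passed without a successful check.
    """
    attempts = 0
    waited = 0.0
    while True:
        attempts += 1
        if check(url):
            return attempts
        if waited >= timeout_s:
            raise TimeoutError(f"{url} not ready after {timeout_s:.0f}s")
        sleep(interval_s)
        waited += interval_s
```

Call `wait_until_ready("http://localhost/install")` right after `docker compose up -d`; the `check` and `sleep` parameters exist only so the loop can be tested without a running server.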

Bonus - How to Upgrade Dify AI 👇

To upgrade Dify AI, pull the latest version of the repository and recreate the containers:

```sh
cd dify/docker
git pull origin main
docker compose down
docker compose pull
docker compose up -d
```
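Upgrades can introduce new settings. Assuming your `dify/docker` folder contains both your own `.env` and the upstream `.env.example` (recent Dify versions ship the latter), a short stdlib Python sketch can list keys that exist upstream but are missing from your copy, so you can merge them before running `docker compose up -d`. The function names are hypothetical, and the parser only handles simple `KEY=value` lines.

```python
from pathlib import Path


def env_keys(text: str) -> set[str]:
    """Collect variable names from simple KEY=value lines, skipping comments."""
    keys = set()
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key = line.split("=", 1)[0].strip()
        if key.startswith("export "):
            key = key[len("export "):].strip()
        keys.add(key)
    return keys


def missing_keys(example_text: str, env_text: str) -> list[str]:
    """Keys defined in .env.example but absent from .env, sorted."""
    return sorted(env_keys(example_text) - env_keys(env_text))


def report(docker_dir: str = "dify/docker") -> list[str]:
    """Read both files from `docker_dir` and return the missing keys."""
    base = Path(docker_dir)
    example = (base / ".env.example").read_text(encoding="utf-8")
    current = (base / ".env").read_text(encoding="utf-8")
    return missing_keys(example, current)
```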

How to use Dify AI

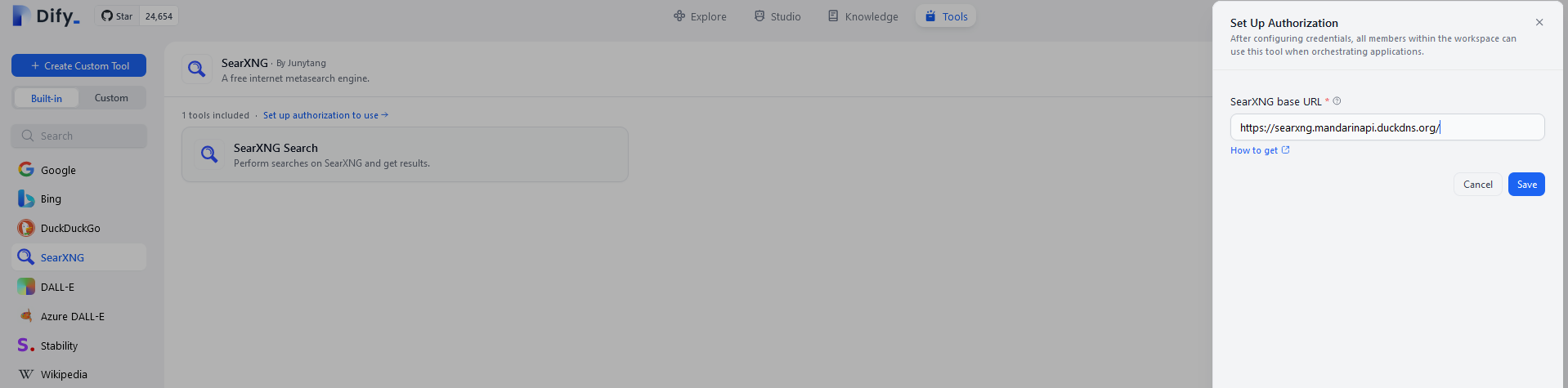

For example, you can combine Dify AI with SearXNG, a self-hosted metasearch engine, as a web search tool.

I run my own local SearXNG instance, served over HTTPS by NGINX with a DuckDNS domain.

For a quick test, you can run SearXNG with this Docker Compose file and connect it to Dify AI:

```yaml
#---
#version: "3.7"

services:
  searxng:
    image: searxng/searxng
    container_name: searxng
    ports:
      - "8080:8080"
    volumes:
      - "/home/Docker/searxng:/etc/searxng"
    environment:
      - BASE_URL=http://localhost:3003/
      - INSTANCE_NAME=SearXNG
      - FORMATS=html json # important so that it accepts Dify AI requests
    restart: unless-stopped
    # networks: ["nginx_nginx_network"] # optional

# networks: # optional
#   nginx_nginx_network: # optional
#     external: true # optional
```
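Before wiring SearXNG into Dify, it helps to confirm that the instance really answers in JSON. The stdlib Python sketch below builds a `/search?q=<query>&format=json` URL and extracts title/URL pairs from the `results` array of SearXNG's JSON response. The base URL `http://localhost:8080` matches the port mapping above and is an assumption about your setup. If the request fails with HTTP 403, JSON output is probably not enabled; in SearXNG that is controlled by the `search.formats` list in `settings.yml`.

```python
import json
import urllib.parse
import urllib.request


def build_search_url(base_url: str, query: str) -> str:
    """SearXNG search endpoint asking for JSON instead of HTML."""
    params = urllib.parse.urlencode({"q": query, "format": "json"})
    return f"{base_url.rstrip('/')}/search?{params}"


def parse_results(body: str, limit: int = 5) -> list[tuple[str, str]]:
    """Return (title, url) pairs from a SearXNG JSON response body."""
    data = json.loads(body)
    return [
        (item.get("title", ""), item.get("url", ""))
        for item in data.get("results", [])[:limit]
    ]


def search(base_url: str, query: str) -> list[tuple[str, str]]:
    """Run one query against a live instance (raises HTTPError on 403)."""
    url = build_search_url(base_url, query)
    with urllib.request.urlopen(url, timeout=10) as resp:
        return parse_results(resp.read().decode("utf-8"))
```

With the container running, `search("http://localhost:8080", "dify")` should return a short list of pairs; an error here points at the SearXNG side rather than at Dify.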

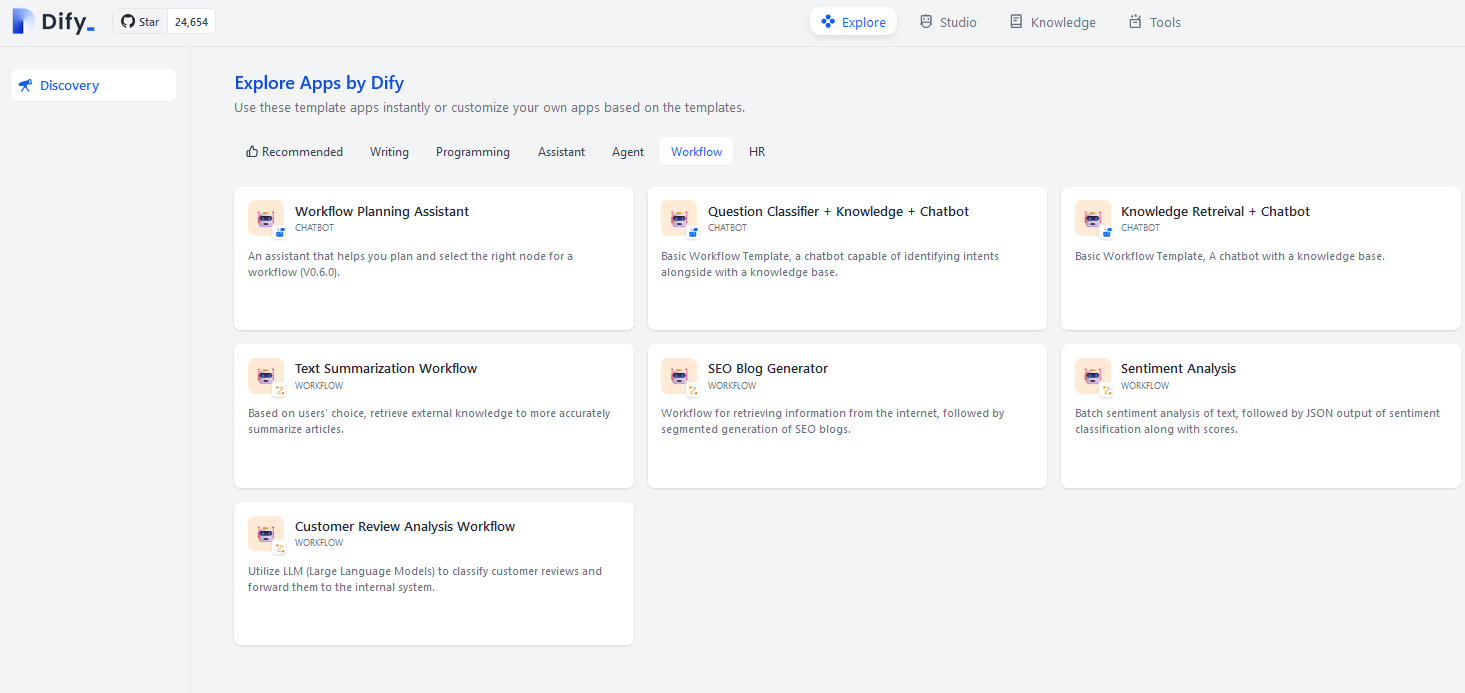

You can also use the sample apps that Dify AI provides:

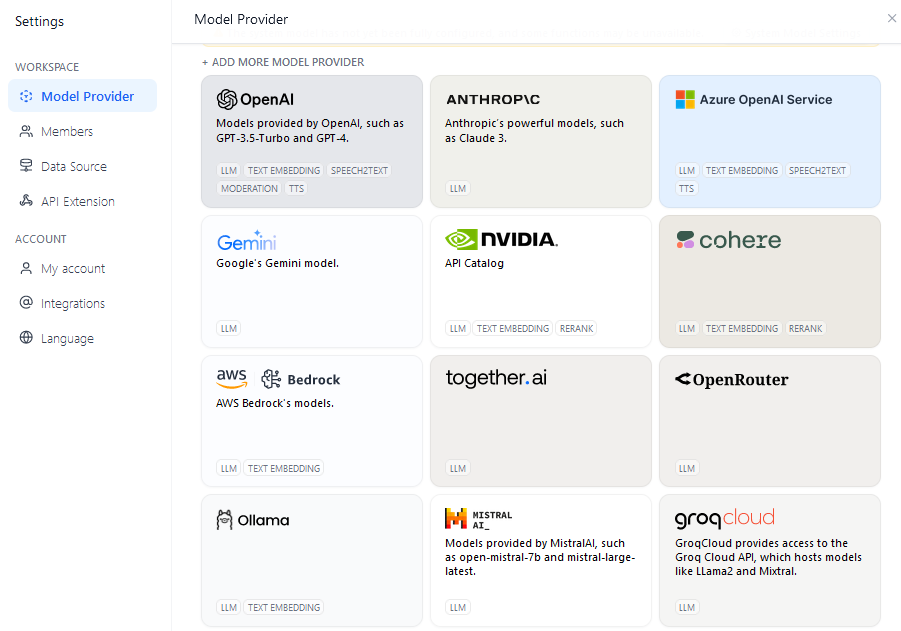

As for the LLMs, don't worry: you can use Dify AI together with Ollama.

FAQ

FREE Low Code AI Tools

Other Free and Open Source Low Code AI Tools:

- Flowise AI (🧠): A versatile low-code AI platform designed to simplify application development with large language models.

F/OSS Vector DBs

- ChromaDB (🔍): An open-source vector database for efficient management of unstructured data.

- Vector Admin (🛠️): A user-friendly administration interface for managing vector databases and more.

- And much more! (🔗): Explore additional F/OSS vector databases for AI projects.

Can I use Dify with a local LLM?

Yes. The model provider list includes Ollama and any OpenAI-compatible endpoint, so a local Ollama instance plugs in directly. With the Ollama provider, set the base URL to http://ollama:11434 (when both containers share a Docker network) and pick the model; with the generic OpenAI-compatible provider, use http://ollama:11434/v1 instead. PrivateGPT and TextGenWebUI also expose compatible endpoints.
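To check the endpoint before adding it in Dify, you can send one chat request to Ollama's OpenAI-compatible `/v1/chat/completions` route. A stdlib Python sketch follows; the base URL `http://localhost:11434/v1` (Ollama reached from the host rather than from another container) and the model name `llama3` are assumptions, so substitute a model you have already pulled.

```python
import json
import urllib.request


def chat_payload(model: str, prompt: str) -> bytes:
    """OpenAI-style chat request body with a single user message."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,
    }
    return json.dumps(body).encode("utf-8")


def first_reply(response_body: str) -> str:
    """Extract the assistant text from an OpenAI-style chat completion."""
    data = json.loads(response_body)
    return data["choices"][0]["message"]["content"]


def ask(base_url: str, model: str, prompt: str) -> str:
    """POST one prompt to <base_url>/chat/completions and return the reply."""
    req = urllib.request.Request(
        f"{base_url.rstrip('/')}/chat/completions",
        data=chat_payload(model, prompt),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=120) as resp:
        return first_reply(resp.read().decode("utf-8"))
```

For example, `ask("http://localhost:11434/v1", "llama3", "Say hello")`. If this works from the host but Dify cannot connect, the problem is usually the URL Dify's containers use to reach Ollama, not the model itself.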

Dify vs Flowise vs LangFlow — what’s the difference?

Flowise is more LangChain-native and lighter. LangFlow is a sibling to Flowise with a similar drag-and-drop UI. Dify is broader — chatbots, agents, full apps, plus a richer dataset/RAG layer. For pure LangChain pipelines, Flowise. For end-user-facing AI apps with auth and conversation history, Dify.

Is the Cloud version necessary?

No. Dify Community Edition is fully self-hostable under the Dify Open Source License (Apache 2.0 with some additional conditions), and the docker-compose stack covered here gives you the same UI as the cloud, minus the managed hosting and team plan features. For a home lab or solo developer, self-hosting is the obvious choice.

How do I expose Dify safely on the internet?

Put it behind Nginx Proxy Manager for HTTPS, or use a Cloudflare Tunnel so you don't have to open any ports on your router. Either way, set CONSOLE_API_URL, CONSOLE_WEB_URL, and the related SERVICE_API_URL, APP_API_URL, APP_WEB_URL, and FILES_URL variables in .env to your real domain; otherwise the UI generates broken links to localhost.
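A quick way to catch the broken-links problem before going public is to scan `.env` for URL variables that are still empty or point at localhost. The sketch below checks CONSOLE_API_URL and CONSOLE_WEB_URL plus the related SERVICE_API_URL, APP_API_URL, APP_WEB_URL, and FILES_URL entries in Dify's `.env`. Treating an empty value as a problem follows the advice above and may be stricter than you need; `find_unset_urls` is a hypothetical helper.

```python
URL_VARS = (
    "CONSOLE_API_URL",
    "CONSOLE_WEB_URL",
    "SERVICE_API_URL",
    "APP_API_URL",
    "APP_WEB_URL",
    "FILES_URL",
)
LOCAL_HOSTS = ("localhost", "127.0.0.1")


def parse_env(text: str) -> dict[str, str]:
    """Map KEY -> value for simple KEY=value lines, ignoring comments."""
    values = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, value = line.split("=", 1)
        # Drop surrounding whitespace and simple quotes around the value.
        values[key.strip()] = value.strip().strip('"').strip("'")
    return values


def find_unset_urls(text: str) -> list[str]:
    """URL variables that are missing, empty, or still point at localhost."""
    env = parse_env(text)
    flagged = []
    for name in URL_VARS:
        value = env.get(name, "")
        if not value or any(host in value for host in LOCAL_HOSTS):
            flagged.append(name)
    return flagged
```

Run it on the file's contents, for example `find_unset_urls(open("dify/docker/.env").read())`; an empty list means every checked variable points somewhere other than localhost.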

What does the resource footprint look like?

Dify launches several containers: API, worker, web, sandbox, vector DB, Redis, Postgres, plus optional plugin daemons. Dify's README lists at least 2 CPU cores and 4 GiB of RAM as the minimum. Run it on something more capable than a Pi 4 if you want it to be responsive.
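On a Linux host you can check memory against that guideline before starting the stack. The sketch reads `MemTotal` from `/proc/meminfo` (Linux only) and compares it with a configurable threshold; the 4 GiB default is an assumption based on the RAM guidance above, and the function names are hypothetical.

```python
from pathlib import Path

GIB = 1024 ** 3


def mem_total_bytes(meminfo_text: str) -> int:
    """Parse the MemTotal line of /proc/meminfo (kernel 'kB' means KiB)."""
    for line in meminfo_text.splitlines():
        if line.startswith("MemTotal:"):
            kib = int(line.split()[1])
            return kib * 1024
    raise ValueError("MemTotal not found")


def enough_memory(meminfo_text: str, min_gib: float = 4.0) -> bool:
    """True if total RAM meets the threshold."""
    return mem_total_bytes(meminfo_text) >= min_gib * GIB


def check_host(min_gib: float = 4.0) -> bool:
    """Read the live /proc/meminfo on a Linux host and apply the threshold."""
    return enough_memory(Path("/proc/meminfo").read_text(), min_gib)
```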
